China Could Abuse Apple’s Child Porn Detection Tool, Experts Say

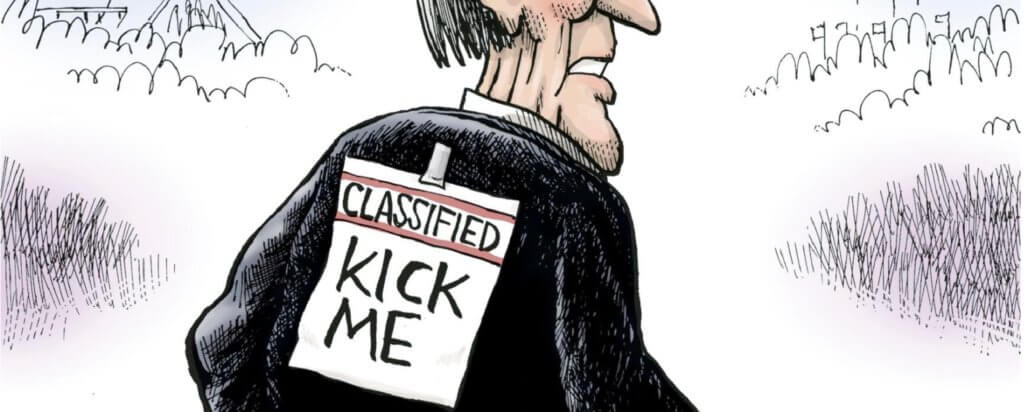

Privacy advocates are warning that China and other authoritarian governments could use Apple’s child pornography detection tool to hunt down political dissidents.

Apple announced last week it will begin scanning user photos stored on iCloud for material found in a database of “Child Sexual Abuse Material.” The company claims this system will protect user privacy by scanning phones directly, rather than moving data to hackable outside servers. But privacy advocates say foreign governments could hijack the tool to track down any material they find objectionable.

Once the detection tool takes effect, Apple will “be under huge pressure from governments to use this for purposes other than going after” child pornography, said the Lincoln Network’s Zach Graves. “It’s not that hard to imagine Beijing saying, ‘you’re going to use this for memes that criticize the government.’”

Apple maintains a good relationship with China, a major consumer market that houses much of the company’s supply chain. The company has used Chinese slave labor to produce its products and lobbied against legislation that would restrict imports that involve forced labor. Apple also stores the personal data of Chinese users on state-owned computer servers.

Tech companies such as Google already scan user content for child pornography. But Apple is the first to embed that function directly into users’ devices. Apple’s method relies on visual hashes, unique representations of photographs. The tool matches hashes on user photos against a database of Child Sexual Abuse Material compiled by the National Center for Missing and Exploited Children and other child safety organizations.
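
To make the idea of hash matching concrete, here is a minimal sketch in Python. It is not Apple’s algorithm: it assumes a simple "average hash" over a tiny grayscale pixel grid as a stand-in for a visual hash, and the names `average_hash`, `hamming_distance`, and `matches_database` are hypothetical, chosen only for illustration.

```python
from typing import Iterable, List

Grid = List[List[int]]  # grayscale pixel values, 0-255 (assumed input format)


def average_hash(pixels: Grid) -> int:
    """Illustrative visual hash: one bit per pixel, set if the pixel is above the mean."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for value in flat:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Count the bit positions where two hashes differ."""
    return bin(a ^ b).count("1")


def matches_database(photo_hash: int, known_hashes: Iterable[int],
                     max_distance: int = 0) -> bool:
    """Return True if the photo hash is within max_distance bits of any known hash."""
    return any(hamming_distance(photo_hash, known) <= max_distance
               for known in known_hashes)


# Toy data: a "known" image, a slightly brightened copy, and an unrelated image.
known_image = [[10, 200], [220, 30]]
brightened = [[20, 210], [230, 40]]
unrelated = [[200, 10], [30, 220]]

# The database holds only hashes, not the images themselves.
database = {average_hash(known_image)}

print(matches_database(average_hash(brightened), database))
print(matches_database(average_hash(unrelated), database))
```

In this toy version, the brightened copy still matches because its light and dark regions fall in the same places, while the unrelated image does not. Note that the matching step has no knowledge of what the hashes represent; it flags whatever is in the database it is given, which is the property privacy advocates are pointing to.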
